Generative AI refers to a class of machine learning models that can create new content, such as text, images, audio, or code, by learning patterns from large amounts of data and using those patterns to produce original outputs in response to user prompts. Its roots reach back to the 1960s, when Joseph Weizenbaum created ELIZA, an early natural language program designed to mimic empathetic conversation.

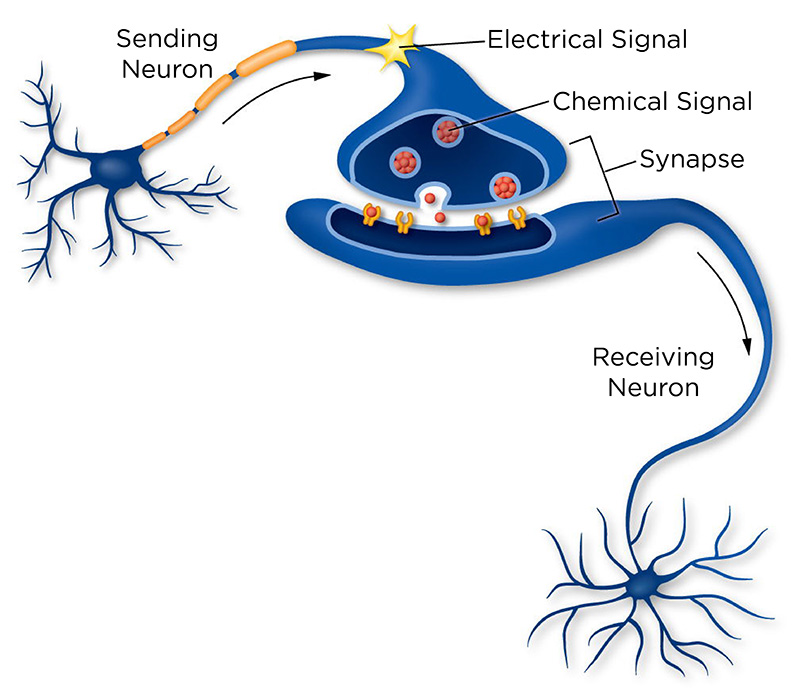

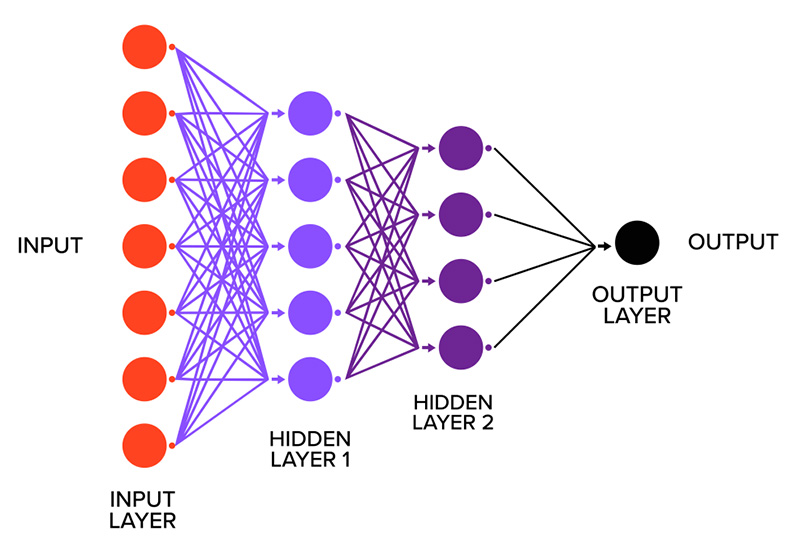

To understand the recent growth and potential impacts of generative AI, and how it works, it is helpful to examine how models known as artificial neural networks (ANNs) evolved into the models used today. These models were inspired by the human brain, in which billions of connected neurons pass signals that allow us to think, move, and make decisions. ANNs apply a simplified version of this idea: small computational units (“neurons”) are stacked in layers that pass information to one another and learn patterns from data.1 A major turning point came with the rise of deep neural networks, which use many layers and can learn far more complex patterns than earlier models. As ANNs evolved, they were increasingly used to implement two long-standing data modeling approaches: discriminative and generative models. Discriminative models focus primarily on prediction tasks, learning relationships between inputs and outputs to classify or separate outcomes. Generative models, by contrast, aim to learn how the data itself is produced by capturing its underlying distribution, which makes it possible to generate new examples that resemble the original data.2
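To make the discriminative/generative distinction concrete, here is a minimal Python sketch on hypothetical one-dimensional data with two classes, "A" and "B". It illustrates the idea under simplifying assumptions and is not how modern neural networks are built: `fit_discriminative` learns only a threshold that separates the labels, while `fit_generative` swaps in a simple normal distribution per class (in place of a neural network) so that it can sample new values resembling the training data. All function names and data values are hypothetical.

```python
import random
import statistics

# Hypothetical toy data: one measurement per example, two labeled classes.
data = {
    "A": [1.0, 1.2, 0.8, 1.1, 0.9],
    "B": [3.0, 3.2, 2.8, 3.1, 2.9],
}


def fit_discriminative(data):
    """Discriminative sketch: learn only the boundary that separates the labels.

    Tries each midpoint between neighboring sorted values and keeps the one with
    the fewest training errors. Assumes class "A" lies below class "B".
    """
    points = [(x, "A") for x in data["A"]] + [(x, "B") for x in data["B"]]
    xs = sorted(x for x, _ in points)
    candidates = [(lo + hi) / 2 for lo, hi in zip(xs, xs[1:])]

    def errors(t):
        # Count points that land on the wrong side of threshold t.
        return sum((x < t) != (label == "A") for x, label in points)

    threshold = min(candidates, key=errors)
    return lambda x: "A" if x < threshold else "B"


def fit_generative(data):
    """Generative sketch: model how each class's values are distributed.

    A normal distribution (mean, standard deviation) per class stands in for the
    much richer distributions that neural generative models learn.
    """
    params = {label: (statistics.mean(xs), statistics.stdev(xs))
              for label, xs in data.items()}

    def sample(label, n, rng):
        # Draw n new values that resemble the training data for `label`.
        mu, sigma = params[label]
        return [rng.gauss(mu, sigma) for _ in range(n)]

    return params, sample


classify = fit_discriminative(data)
print(classify(1.05), classify(2.95))  # → A B

params, sample = fit_generative(data)
rng = random.Random(0)  # fixed seed so the sketch is repeatable
new_points = sample("B", 3, rng)
print([round(x, 2) for x in new_points])  # three new values near 3.0
```

The discriminative function can only answer "which class?", whereas the generative one can also produce new examples; that second capability is what generative AI scales up with far larger models and datasets.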

After a series of developments in key model architectures, including variational autoencoders (VAEs), generative adversarial networks (GANs), diffusion models, and transformers, the ability of AI systems to generate realistic data at scale expanded significantly. A breakthrough for generative models came with the emergence of foundation models and, ultimately, today’s large-scale generative AI systems. Unlike earlier task-specific models, foundation models learn general patterns by solving millions or billions of “fill in the blank” tasks, such as predicting the next word in a sentence or the next part of an image. Through this process, the model learns compact and meaningful representations of concepts, patterns, and relationships in the data. These representations act like internal maps that show how different pieces of information relate to one another. Training such models is computationally expensive and resource-intensive. Once trained, foundation models can draw on these representations to generate new content, including text, images, and code, and they form the basis of what we now call generative AI.
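The “fill in the blank” objective can be illustrated with a deliberately tiny stand-in. The hypothetical Python sketch below counts, in a made-up one-line corpus, which word follows each word (a bigram model), predicts the next word as the most frequent follower, and generates text by repeating that prediction. Foundation models pursue this kind of objective with large neural networks (typically transformers) trained on vast datasets and learn far richer representations; simple counting is an assumption made here only to show the shape of the task, and all names are illustrative.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus; "." is treated as an ordinary token.
corpus = "the cat sat on the mat . the cat ate the fish ."


def train_bigram(text):
    """For every word, count which words immediately follow it."""
    words = text.split()
    followers = defaultdict(Counter)
    for current, nxt in zip(words, words[1:]):
        followers[current][nxt] += 1
    return followers


def predict_next(followers, word):
    """Fill in the blank: return the most frequent follower of `word`, or None if unseen."""
    if word not in followers:
        return None
    return followers[word].most_common(1)[0][0]


def generate(followers, start, max_words=6):
    """Build text by repeatedly predicting the next word (greedy decoding)."""
    out = [start]
    while len(out) < max_words:
        nxt = predict_next(followers, out[-1])
        if nxt is None:
            break
        out.append(nxt)
    return " ".join(out)


model = train_bigram(corpus)
print(predict_next(model, "the"))  # → cat
print(generate(model, "the"))      # → the cat sat on the cat
```

Because greedy decoding always picks the single most likely word, the output quickly loops back on itself; real systems often sample from the predicted probabilities instead, among many other refinements.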

In November 2022, the launch of ChatGPT catalyzed the mainstream adoption of generative AI. Since then, major technology companies have rapidly integrated generative AI tools into widely used platforms, for example Microsoft 365 Copilot, Meta AI, and Google’s Gemini in Google Workspace and Search. Beyond productivity and search tools, generative AI is increasingly used in healthcare and education. Ambient AI tools, for example, generate clinical documentation from clinician-patient conversations, and tools like Khan Academy’s Khanmigo support grading and tutoring. As a result, generative AI is becoming embedded in daily life as a kind of default AI: people use it even when they do not actively seek it out, sometimes in ways they cannot opt out of, and it is transforming how they interact with digital platforms. This growing integration has raised questions among the public, practitioners, and policymakers about how generative AI should be governed to ensure transparency, accountability, and public benefit while minimizing risks and maintaining trust.

Information Processing in Brains (top) and Deep Neural Networks (bottom)

SOURCE: “Neurons Transmit Messages In the Brain,” University of Utah, https://learn.genetics.utah.edu/content/neuroscience/neurons/ (top); “Deep Learning vs. Neural Network: What’s the Difference?,” Smartboost, https://www.smartboost.com/blog/deep-learningvs-neural-network-whatsthe-difference/ (bottom).

Potential Impacts or Issues of Concern

While generative AI assistance can be useful, its integration also has impacts and consequences, intended or not, that are worth noting. Some of them are listed below:

- Bias—Bias can arise from training data or from values embedded by the system’s creators. Because large language models (LLMs) are trained on extensive and diverse datasets, they can mirror both the biases embedded in that data and broader societal biases. User-driven confirmation bias can also arise, as the wording of prompts shapes model responses, and in extended conversations, earlier responses can reinforce assumptions over time.

- Ownership—Because data is essential to generative AI, it is important to consider ownership and authorship. Since AI-generated outputs generally lack copyright protection, they can appear to be ownership-free content, even though they are derived from preexisting work, often without consent, credit, or compensation (known as the 3 C’s). These issues raise questions about intellectual property, particularly for creative workers.

- Overreliance—While generative AI improves efficiency by quickly answering complex questions, it also creates a risk of overreliance. Users may adopt cognitive shortcuts, prioritizing convenience over scrutiny; this can weaken skills such as creativity, critical thinking, and problem-solving, and can reinforce automation bias through habitual acceptance of AI recommendations. Among younger users, such as teenagers and high school students, the long-term effects of overreliance may differ from those in older learners, with potential implications for cognitive development. Students in this age group may also turn to AI for digital therapeutic support, which could delay their seeking care from qualified human professionals.

- Misuse—Like most technology, generative AI is susceptible to misuse by people with malicious or immoral intentions, who can often exploit its existing capabilities with minimal technical expertise. One prominent example is deepfakes, in which faces, voices, or text are manipulated to create inappropriate, sexualized, or defamatory images, videos, or audio, posing risks to individuals, institutions, and public trust.

- Environmental Health Impacts—As AI use scales, its environmental footprint grows. Data centers, which house computer servers, data storage systems, and networking equipment needed to train, deploy, and operate AI systems, are energy-intensive, contribute to carbon emissions, and require substantial amounts of water for cooling.

ABOUT THE AUTHOR(S)

Sadia Rahman is a New York State Science Fellow at the Rockefeller Institute of Government.

Nikaley Castillo is a research assistant at the Rockefeller Institute of Government.